-

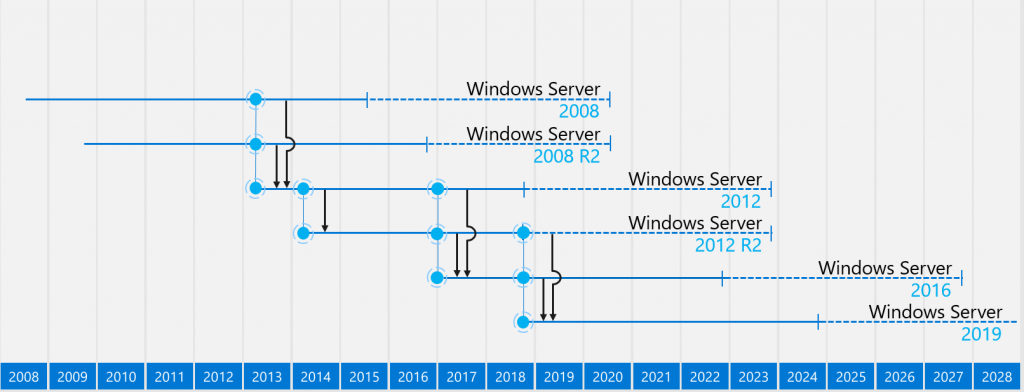

In-Place Upgrades for Cloud Servers

There are several reasons why your legacy VMs running in the cloud may benefit from an in-place OS upgrade: end-of-support, compatibility, or enhanced security. It’s always recommended to deploy a new server and migrate the app whenever possible, but many organizations are carrying technical debt that prevents app migration or refactoring to another service. Some examples…

-

How To Migrate Your WordPress Site to an Azure App

Update 2/25/2022 – A new and improved App Service for WordPress has been released with better performance and easier configuration to run on Azure. More info is available from Microsoft here. WordPress powers nearly 40% of all websites on the Internet. This is a simple guide on how to run your WordPress site as an…

-

How To Run Folding@home on Azure Spot VMs to Help Fight COVID-19

Quickstart: If you want to skip the details and deploy this solution in Azure, here is a link to my GitHub repo with everything used in this post: https://github.com/covid19folding/AzureSpotVMWorker Overview: Azure Spot VMs are a special Azure offering that provides major savings over traditional VMs (sometimes up to 90%!), but they do not come with…

-

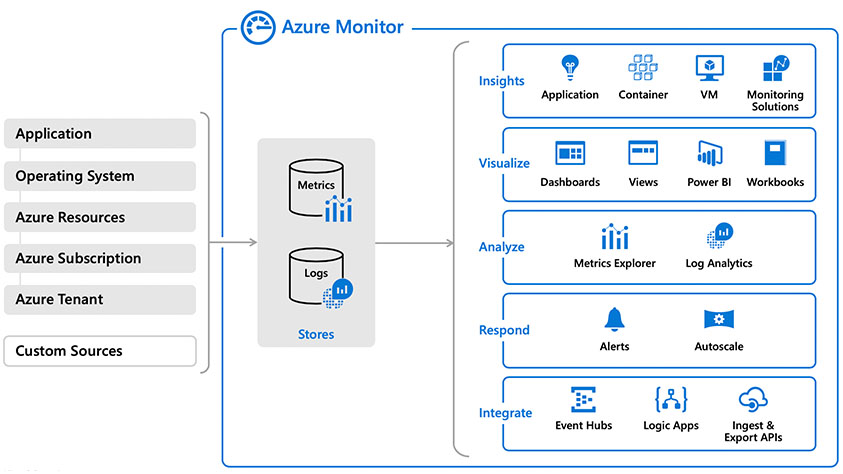

Building a Cloud-Based Monitoring Solution With Azure Monitor and Log Analytics

Contents: Intro, Overview, Log Analytics Setup, Agent Deployment, Scripted Agent Deployment, Solutions, Log Queries, Alerts, Monitor Metrics, Summary. As organizations transition from server-based infrastructure to containers, serverless computing, and PaaS/SaaS offerings in the cloud, our monitoring and operations tools must evolve as well. Every organization has different needs and unique requirements to support their applications…

-

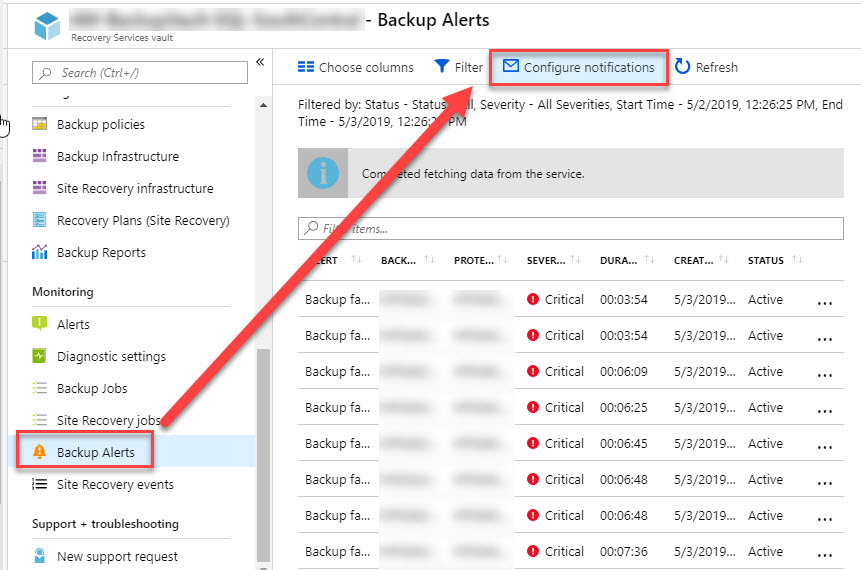

Running IaaS SQL Server in Azure? Back It Up with Azure Backup for SQL Server

With today’s hybrid and cloud-only infrastructure, it’s common to be running SQL Server in the cloud to support System Center and other applications. Of course, every database should be backed up. In this post, I will cover how to do this quickly and painlessly using Azure Backup without any additional infrastructure. In recent months, Microsoft…

-

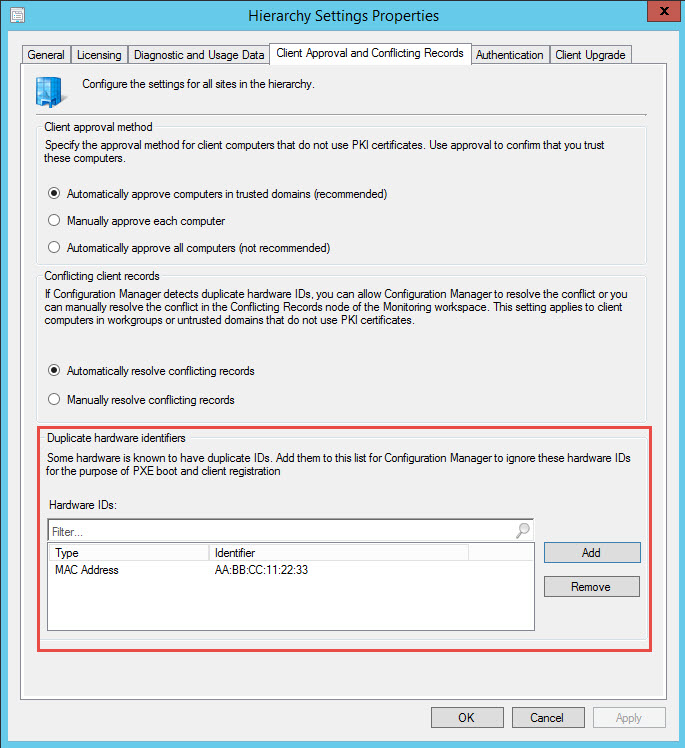

Easily Reuse Network Adapters in SCCM for PXE-based OS Deployment

PXE-booting has been around for a long time and is still used today for OS deployment in Configuration Manager and many other tools. A few years ago (ok, maybe a decade) when laptops/tablets started shipping without Ethernet ports, it threw a wrench in the PXE-booting process used for OS deployment. Not only was the port…
